
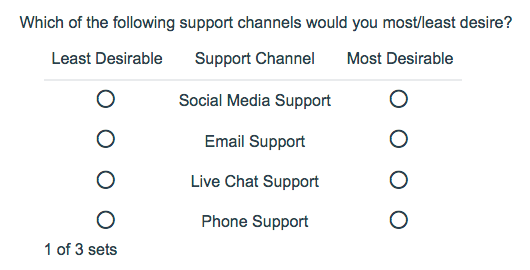

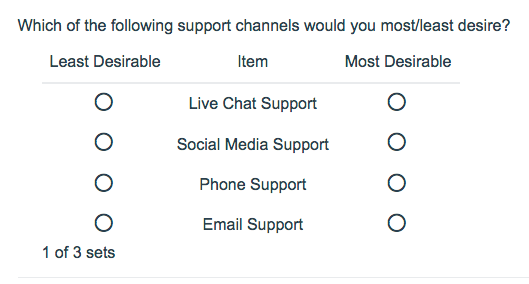

With the Max Diff question type (also known as maximum difference scaling), respondents are shown a set of the possible attributes and are asked to indicate the best and worst attributes (or most and least important, most and least appealing, etc.).

When to use it

Max Diff is an alternative to standard rating scales, whose results might lead you to believe that everything is important. By forcing respondents to choose between options, Max Diff delivers results that show the relative importance of the items being rated.

Consider the above example in which a respondent evaluates four fruits: apples, oranges, bananas, and pears.

If the respondent says that apples are best and pears are worst, these two responses tell us about five of the six possible paired comparisons:

- apples vs. oranges,

- apples vs. bananas,

- apples vs. pears,

- oranges vs. pears,

- bananas vs. pears

The only paired comparison that cannot be inferred is oranges vs. bananas. As you can see, two selections capture most of the pairwise information, which produces better data than a standard ranking question. People are also much better at judging items at the extremes than at discriminating the importance of items in the middle.
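To make the paired-comparison arithmetic concrete, here is a minimal Python sketch that lists which pairs a single best/worst choice resolves. It uses the four fruits from the example; the function name `inferred_pairs` is hypothetical and not part of SurveyGizmo.

```python
from itertools import combinations

def inferred_pairs(shown, best, worst):
    """Return (all_pairs, resolved_pairs) for one best/worst choice.

    A pair is resolved when it contains the best item (which beats every
    other item shown) or the worst item (which loses to every other item shown).
    """
    all_pairs = list(combinations(shown, 2))
    resolved = [p for p in all_pairs if best in p or worst in p]
    return all_pairs, resolved

shown = ["apples", "oranges", "bananas", "pears"]
all_pairs, resolved = inferred_pairs(shown, best="apples", worst="pears")
unresolved = [p for p in all_pairs if p not in resolved]
print(len(resolved), "of", len(all_pairs))  # 5 of 6
print(unresolved)                           # [('oranges', 'bananas')]
```

With k attributes in a set, one best/worst choice always resolves every pair except those among the k - 2 "middle" items.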

The Max Diff question type has the added benefit of showing random sets of attributes, so respondents are not evaluating all attributes at the same time, which is really hard for people to do. Displaying the same attributes several times also gives you more robust data.

What is an attribute? An attribute is a property of the object, product, brand, service, or advertisement that you are comparing.

What is a set? A set is a randomly selected group of attributes.

Setup

- Click the Question link on the page where you would like to add your Max Diff question. Max Diff questions must be on a page by themselves on the Build tab, because the respondent uses the Next button to submit each set.

- Select Max Diff from the Question Type dropdown and enter the question you wish to ask.

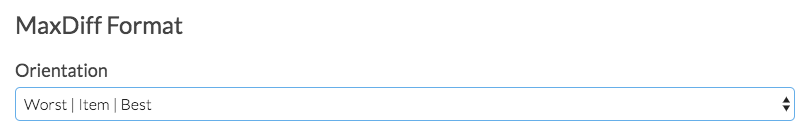

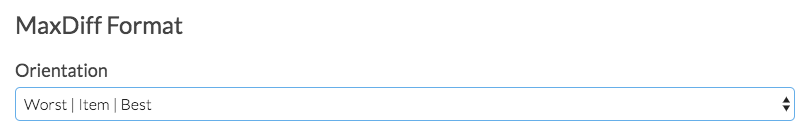

- Under the MaxDiff Format section, select the Orientation you desire.

Best Practice Tip: Orientation

We recommend the Worst | Item | Best layout option. We have found that this layout best conveys the task at hand: survey respondents can easily see that you want them to compare the attributes and select one for each column. In addition, worst/least to best/most is a standard layout that most respondents will expect (in left-to-right languages).

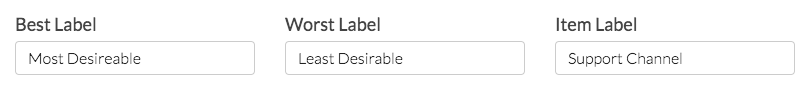

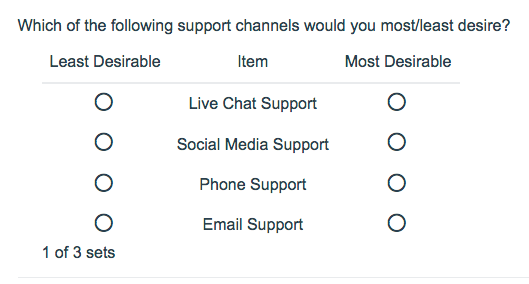

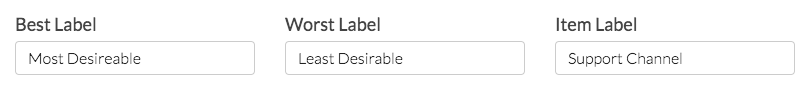

- Next, you can also customize the Best and Worst Labels, as well as the Label for the Items respondents are evaluating.

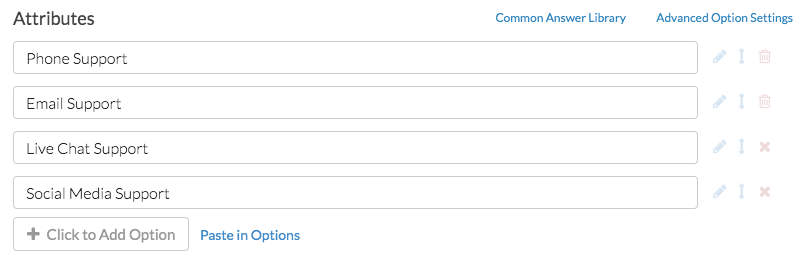

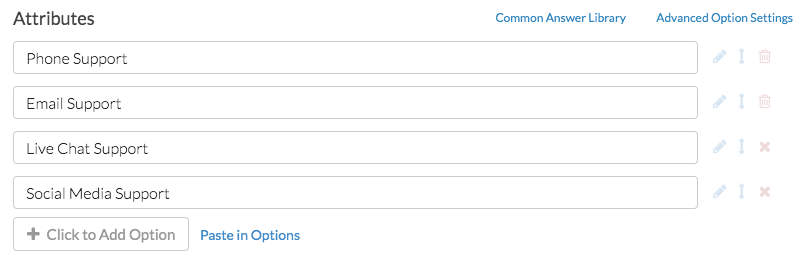

- Next, scroll down and add the attributes you would like your respondents to evaluate.

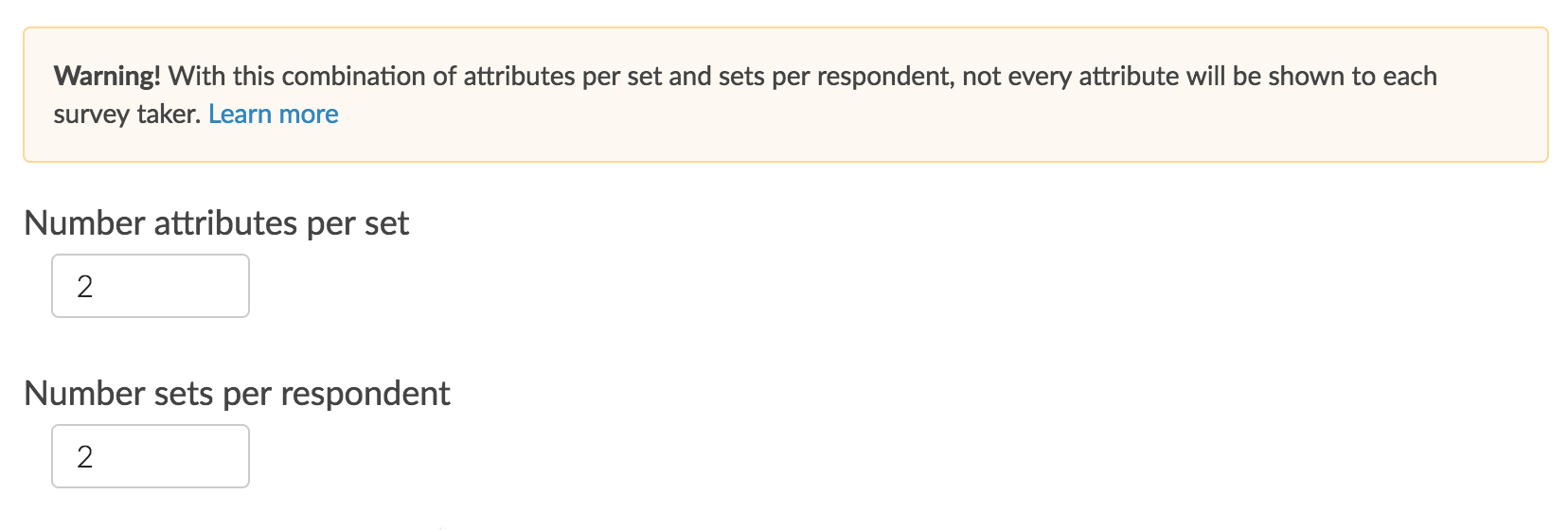

- Finally, above your attributes, customize the Number of attributes per set and the Number of sets per respondent. By default, your question is set up to show 4 attributes per set and 6 sets per respondent. As a result, you will see the following warning:

Warning! With this combination of attributes per set and sets per respondent, not every attribute will be shown to each survey taker.

We recommend setting up your question to show each attribute at least 3 to 5 times per respondent. To do so, use one of the equations below, plugging in the total number of attributes (K) and the number of attributes per set (k):

- 3 times per respondent: 3K/k = number of sets to show

- 4 times per respondent: 4K/k = number of sets to show

- 5 times per respondent: 5K/k = number of sets to show
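Here is the same calculation as a small Python helper. Rounding up to a whole set when times × K / k is not an integer is our assumption (you cannot show a fraction of a set); the function name `sets_needed` is hypothetical.

```python
def sets_needed(num_attributes, per_set, times_shown=3):
    """Sets per respondent so each attribute appears about `times_shown`
    times on average: times_shown * K / k, rounded up to a whole set.
    Integer ceiling division avoids floating-point rounding surprises."""
    return (times_shown * num_attributes + per_set - 1) // per_set

# Example: 16 attributes (K), 4 attributes per set (k)
for times in (3, 4, 5):
    print(times, "times ->", sets_needed(16, 4, times), "sets")
# 3 times -> 12 sets
# 4 times -> 16 sets
# 5 times -> 20 sets
```

For 10 attributes at 4 per set, 3 × 10 / 4 = 7.5, so the helper returns 8 sets.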

Survey Taking

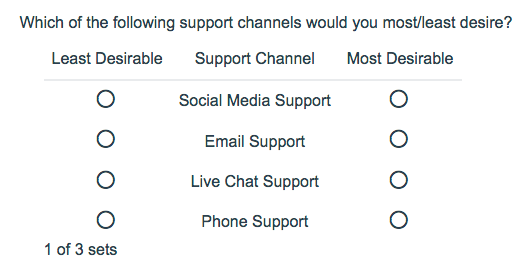

On desktops and most laptops, the Max Diff question type looks like the screenshot below. Remember, your Max Diff question should be the only question on the page!

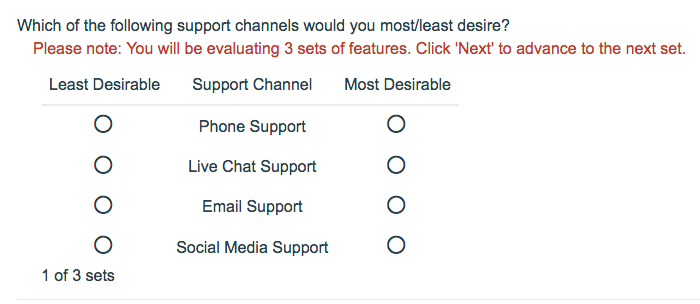

Best Practice Tip: Add Instructions

It is a good idea to add instructions to the question text so respondents know they are evaluating different sets. We also recommend displaying no more than 5 attributes at one time. In addition, displaying attributes in multiple sets will give you more robust data!

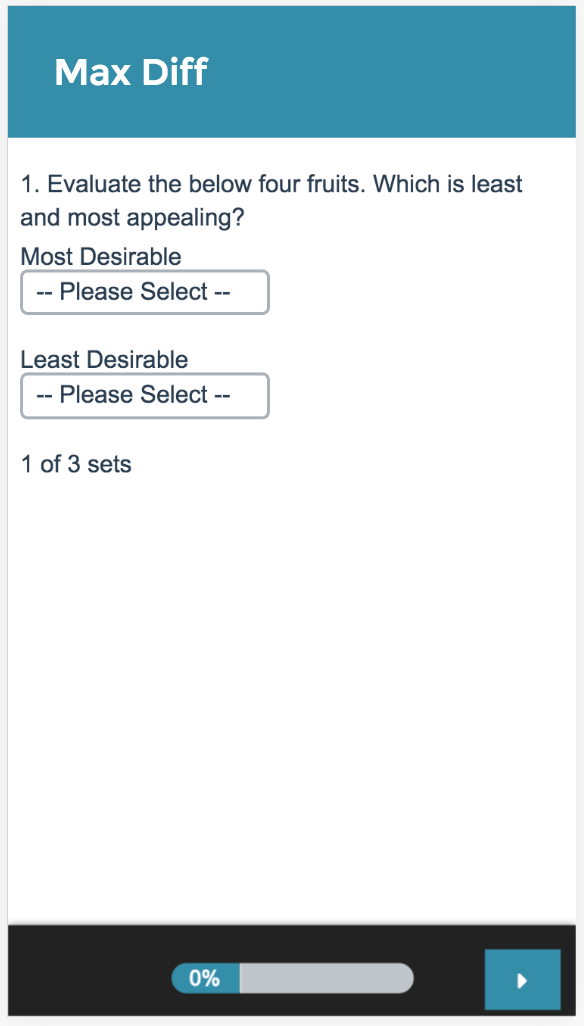

When the survey is optimized for mobile devices, the Max Diff question converts to a mobile-friendly format, as pictured below.

By default, survey questions show one at a time on mobile devices to prevent the need for scrolling on smaller screens. You can turn off this one-at-a-time interaction if you wish.

Reporting

Standard Report

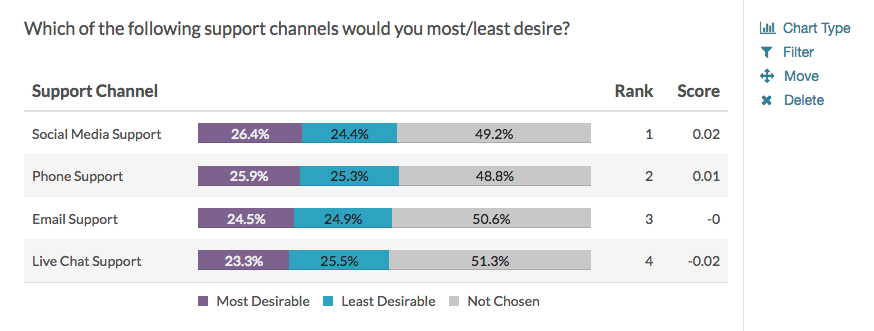

We have improved the reporting of the Max Diff question in the Standard Report! It's much easier to understand and allows for more visibility into how items were ranked. For each attribute you will see the percentage of times it was ranked as most appealing, least appealing, or not chosen.

The attributes are ranked by score, which is computed using the formula below:

$$\text{Score} = \frac{\#\,\text{times selected as best} - \#\,\text{times selected as worst}}{\#\,\text{times the item appeared}}$$

From the score we can determine a couple of things:

- The higher the score, the more appealing the attribute is to respondents.

- A positive score means that the attribute was selected as MOST appealing more often than LEAST appealing.

- A negative score means that the attribute was chosen as LEAST appealing more often than MOST appealing.

- A score of zero means that the attribute was chosen as MOST and LEAST appealing an equal number of times, OR that it was never chosen as either most or least appealing.

- If one item's score is twice as large as another item's score, the first item can be interpreted as twice as appealing.
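As an illustration of the scoring formula, the Python sketch below computes (best - worst) / appearances for each attribute and sorts from highest to lowest. The tallies are invented for the example, and returning 0.0 for an attribute that never appeared is our assumption, not documented SurveyGizmo behavior.

```python
def maxdiff_scores(counts):
    """counts maps attribute -> (times_best, times_worst, times_shown).

    Returns (attribute, score) pairs sorted from highest to lowest score,
    where score = (best - worst) / shown. An attribute that was never
    shown gets 0.0 (an assumption made here to avoid dividing by zero).
    """
    scores = {}
    for attr, (best, worst, shown) in counts.items():
        scores[attr] = (best - worst) / shown if shown else 0.0
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)

# Hypothetical tallies, for illustration only
counts = {
    "apples":  (30, 5, 50),
    "oranges": (10, 10, 50),
    "bananas": (15, 20, 50),
    "pears":   (5, 25, 50),
}
for attr, score in maxdiff_scores(counts):
    print(f"{attr:8} {score:+.2f}")
```

Here apples ranks first at 0.50, oranges scores exactly zero because it was picked best and worst equally often, and pears ranks last at -0.40.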

See additional compatible chart types

Within the Standard Report there are various chart types available for visualizing your data. The below grid shows which of the chart types Max Diff questions are compatible with.

See what other report types are compatible

The grid below shows whether Max Diff questions are compatible with each of our report types. If you plan to do specific analysis within SurveyGizmo, this report compatibility chart should help you choose the right question types.

| Report Type | Compatible |
|---|---|
| Standard | |
| Legacy Summary | |
| TURF | |
| Profile | |
| Crosstab | |
| Comparison | |

Exporting

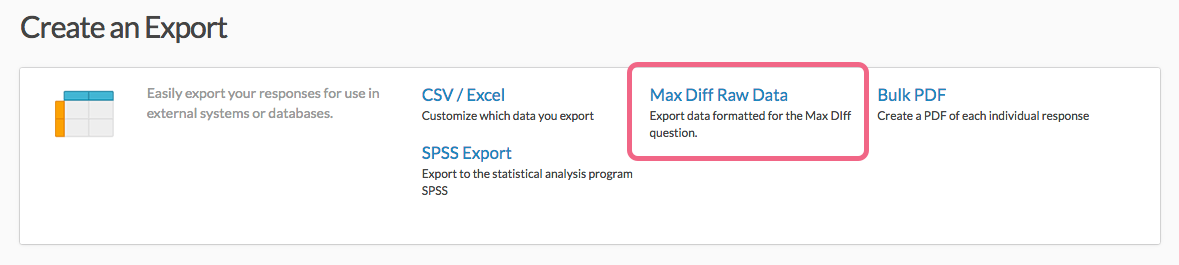

The Max Diff question has its own export which will be available under Results > Exports. Once there, select the Max Diff Raw Data option.

The Max Diff question does not export via the SPSS Export.

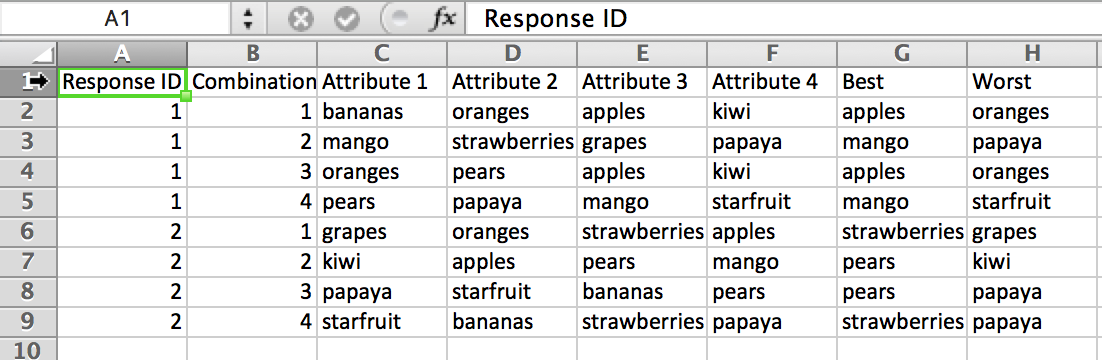

The export contains one row per combination displayed to the respondent. Each row has a column for each attribute displayed in that combination, plus a Best column and a Worst column. The reporting values of your Max Diff question populate these columns.

Looking at Response ID 1, Combination 1 in the example export, you can see that the following attributes were displayed to the respondent as their first combination: bananas, oranges, apples, kiwi. Apples were selected as best and oranges were selected as worst.
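If you want to compute scores outside SurveyGizmo, you first need per-attribute tallies from the raw export. The Python sketch below assumes a CSV layout with columns named `Response ID`, `Combination`, `Attribute 1` to `Attribute 4`, `Best`, and `Worst`; these names are hypothetical, so match them to your actual file. The first data row mirrors the example above; the second row is invented for illustration.

```python
import csv
import io
from collections import Counter

# Hypothetical export excerpt; real column names may differ.
SAMPLE = """Response ID,Combination,Attribute 1,Attribute 2,Attribute 3,Attribute 4,Best,Worst
1,1,bananas,oranges,apples,kiwi,apples,oranges
1,2,pears,apples,kiwi,bananas,kiwi,pears
"""

def tally(rows, attribute_cols):
    """Count how often each attribute was shown, picked best, and picked worst."""
    shown, best, worst = Counter(), Counter(), Counter()
    for row in rows:
        for col in attribute_cols:
            if row[col]:                 # skip empty cells in shorter sets
                shown[row[col]] += 1
        best[row["Best"]] += 1
        worst[row["Worst"]] += 1
    return shown, best, worst

reader = csv.DictReader(io.StringIO(SAMPLE))
cols = [f"Attribute {i}" for i in range(1, 5)]
shown, best, worst = tally(reader, cols)
print(shown["apples"], best["apples"], worst["apples"])  # 2 1 0
```

These three counters supply the best, worst, and appearance counts that the score formula in the Reporting section needs.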

FAQ

Will each respondent see each attribute?

Not necessarily. Sets are selected somewhat randomly, so depending on the number of attributes, attributes per set, and number of sets displayed, a respondent may not see every attribute in the list.
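To get a feel for how likely it is that a respondent sees every attribute, the Python simulation below treats each set as an independent random draw of k distinct attributes. That random-draw model is a simplifying assumption, not SurveyGizmo's exact design, and `share_seeing_all` is a hypothetical name.

```python
import random

def share_seeing_all(num_attributes, per_set, num_sets, trials=10_000, seed=1):
    """Estimate the share of respondents who see every attribute, assuming
    each set is an independent random sample of `per_set` distinct attributes."""
    rng = random.Random(seed)
    attributes = range(num_attributes)
    hits = 0
    for _ in range(trials):
        seen = set()
        for _ in range(num_sets):
            seen.update(rng.sample(attributes, per_set))
        hits += len(seen) == num_attributes   # True counts as 1
    return hits / trials

print(share_seeing_all(16, 4, 6))   # 24 slots for 16 attributes
print(share_seeing_all(16, 4, 12))  # each attribute shown about 3 times on average
```

If the number of slots (sets × attributes per set) is smaller than the number of attributes, the share is always zero; even at 3 appearances on average, some respondents will usually still miss an attribute.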

How is the experiment set up?

The attributes are randomized. From this randomized list, a number of attributes (the attributes per set) are selected to create a unique set. This process is repeated to create all of the possible sets. The order in which the attributes are displayed within each set is also randomized. (Ex: Respondent 1 may see ABCD and Respondent 10 may see CABD. These are the same four attributes, presented in a different order.)
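The steps described above can be sketched in Python roughly as follows. This is a simplified illustration under our own assumptions, not SurveyGizmo's code; `build_sets` is a hypothetical name, and duplicate combinations are rejected to keep each set unique.

```python
import math
import random

def build_sets(attributes, per_set, num_sets, rng=None):
    """Simplified sketch of randomized set construction.

    Each round shuffles the attribute list, takes the first `per_set`
    attributes as a candidate set, skips it if that combination already
    exists, and shuffles the display order of each accepted set.
    """
    rng = rng or random.Random()
    if num_sets > math.comb(len(attributes), per_set):
        raise ValueError("more sets requested than unique combinations exist")
    seen = set()   # unordered combinations already used
    sets = []
    while len(sets) < num_sets:
        shuffled = list(attributes)
        rng.shuffle(shuffled)             # randomize the attribute list
        candidate = shuffled[:per_set]    # select a set from the list
        key = frozenset(candidate)
        if key in seen:
            continue                      # duplicate combination: draw again
        seen.add(key)
        rng.shuffle(candidate)            # randomize display order within the set
        sets.append(candidate)
    return sets

# Usage: 6 attributes, 4 per set, 5 sets (seeded for repeatability)
for s in build_sets(list("ABCDEF"), per_set=4, num_sets=5, rng=random.Random(42)):
    print("".join(s))
```

The final shuffle is technically redundant after the full-list shuffle; it is kept to mirror the separate "randomize display order" step described above.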

Will sets be shown equally?

There is a check in place to look up how frequently a set has been shown to respondents. If a particular set has been shown more frequently than another set, it will be replaced with a less frequently viewed set.
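One simple way to implement a "prefer less frequently shown sets" check is sketched below in Python. SurveyGizmo does not document its actual logic here, so treat this as a stand-in; `pick_least_shown` is a hypothetical name.

```python
from collections import Counter

def pick_least_shown(candidate_sets, shown_counts, needed):
    """Choose `needed` sets, preferring those shown least often so far.

    shown_counts is a Counter mapping frozenset(set) -> times shown.
    Ties keep their original (already randomized) order because sorted()
    is stable. The chosen sets' counts are incremented in place.
    """
    ranked = sorted(candidate_sets, key=lambda s: shown_counts[frozenset(s)])
    chosen = ranked[:needed]
    for s in chosen:
        shown_counts[frozenset(s)] += 1   # record that this set has been shown
    return chosen

counts = Counter()
candidates = [["A", "B", "C", "D"], ["A", "B", "E", "F"], ["C", "D", "E", "F"]]
first = pick_least_shown(candidates, counts, 2)   # respondent 1
second = pick_least_shown(candidates, counts, 2)  # respondent 2 gets the unseen set first
print(first)
print(second)
```

Over many respondents, this keeps the number of times each set has been shown roughly equal.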

How many responses do I need? How many sets should I show? How many attributes per set should I show?

We're answering these questions together because the answers depend on each other. There's no hard and fast formula, but here are some guidelines:

- Show four to five attributes per set.

- Show each attribute 3 to 5 times on average per respondent across the sets.

- The number of sets to display so that each item is shown on average 3 times per respondent is equal to: 3(K/k) where K is the total number of attributes and k is the number of items per set.

- As far as the number of responses, you generally need 200 responses per segment* that you are targeting.

*This refers to segments in data analysis. If you plan to segment your max diff data, say by males and females, you will need the above number of responses for each segment.

Displaying attributes more than once, in different sets, gives you even better data. An attribute that is ranked again against a different set of attributes yields more information about its relative importance against a wider range of options.

Don't worry about whether each respondent sees all attributes. Instead, just collect a healthy number of responses; over a larger number of respondents all of the sets will be shown.

Can I hide the "x per y sets" counter that displays below my Max Diff question?

You can! Go to the Style tab of your survey, scroll to the bottom of the survey preview, and click the link to the CSS/HTML Editor. Then paste the following code on the Custom CSS tab:

```css
.sg-maxdiff-progress {
  display: none;
}
```

Admin

— Dave Domagalski on 06/13/2019

@Jared: Thank you for your question!

Yes, the image issue has been resolved. You can now add images as Max Diff attributes.

This article should be a good starting point for adding images as answer options:

https://help.surveygizmo.com/help/use-images-as-answer-options

I hope this helps!

David

Technical Writer

SurveyGizmo Customer Experience

— Jared on 06/12/2019

Hello - on 03/20/2018 @dave said there was an issue using images with the Max Diff question - has this been resolved?

Admin

— Bri Hillmer on 05/21/2018

@Ezequiel: "Utilities" are not part of the aggregate analysis for the Max Diff.

If you are referring to the aggregate scores that are provided as part of the report for Max Diff these are available for download.

In addition, you can export the raw data for Max Diff.

I hope this helps!

Bri Hillmer

Documentation Coordinator

SurveyGizmo Customer Experience Team

— Ezequiel on 05/21/2018

Does Survey Gizmo report utilities when exporting to Excel? We need to work with utilities and also with a segmentation of the data file.

Admin

— Dave Domagalski on 03/20/2018

@Kenneth: This should be possible, though there is currently an issue preventing this from working properly.

The problem has been reported to our Development team; we hope to have a fix out soon!

I'm sorry for the trouble!

David

Documentation Specialist

SurveyGizmo Customer Experience

— Tom on 03/20/2018

Is it possible to use images as attributes? Referring to an embed code from the image library does not seem to work.

Admin

— Bri Hillmer on 02/27/2018

@Karen: Within a given set, respondents should be able to change their answers, however, once that set is submitted there is not currently a way to allow respondents to navigate back to a previous set.

Bri Hillmer

Documentation Coordinator

SurveyGizmo Customer Experience Team

— Suzanne on 02/27/2018

Can responders not change their answers on max/diff? If so, where do I adjust that setting?

Admin

— Bri Hillmer on 07/20/2017

@Christian: Thanks for this feedback!

The only way to prevent the Max Diff question from converting to dropdown menus for selection is to turn off mobile optimization altogether. To do so, go to the Style tab, select Layout > Mobile Interaction, and select Not Mobile Optimized.

Of course, this would also turn off optimization for all questions, so it's probably not ideal. I'll make a note of this for the development team to discuss as a possible future improvement.

I hope this helps!

Bri Hillmer

Documentation Coordinator

SurveyGizmo Customer Experience Team

— Christian on 07/20/2017

I would like to show the participants a standard MaxDiff with radio buttons for mobile devices.

I have already switched the mobile interaction to "standard" but there is still this useless drop-down menu. Is there a possibility to deactivate the drop-down view on mobile devices?

Best regards,

Chris

Admin

— Bri Hillmer on 03/16/2017

@Jimmy: My pleasure!

Bri

Documentation Coordinator

SurveyGizmo Customer Experience Team

— Jimmy on 03/16/2017

@Bri: I do agree that respondents need to see each attribute several times. I do appreciate you sharing your calculation; that is very helpful.

Admin

— Bri Hillmer on 03/16/2017

@Jimmy: Respondents should really see each attribute between 3 and 5 times.

The number of sets to display so that each item is shown on average 3 times per respondent is equal to: 3(K/k) where K is the total number of attributes and k is the number of items per set.

I hope this helps!

Bri

Documentation Coordinator

SurveyGizmo Customer Experience Team

— Jimmy on 03/16/2017

Per our methodological understanding of MaxDiff, in order for any one case's derived importance to be considered valid, they need to have seen each concept at least once. Since each respondent is not guaranteed to see each attribute, do you have a good estimator for how many rotations should be used per respondent? In the end we will throw out any respondent that did not see all of the concepts. For example, say I want to test 16 concepts and display 4 concepts per set. Assuming that altering the size of sample is not an issue, what do you suggest is a good number of sets to show per respondent?

Thank you!

Admin

— Bri Hillmer on 11/30/2016

@Ben: The previous max diff export data is no longer available because it did not give users what they needed to analyze the data outside of SurveyGizmo, which was a common request. The new export data will allow users to do so.

The reason that the data is not included in the main export is because there are multiple observations per response. I will pass along your feedback about needing to export per max diff question to our development team. Perhaps we can include all max diff questions in the max diff export.

Thanks for your feedback!

Bri

Documentation Coordinator

SurveyGizmo Customer Experience Team

— Ben on 11/29/2016

Is there any way we can have the option to get the old export back? Why do we now have to export on a question-by-question basis?

Admin

— Bri Hillmer on 06/22/2016

@Gregorioetchevers: The export of the Max Diff question does not currently give you the raw data that you would need to compute a Bayesian average outside of SurveyGizmo. What you would need is what is available in the Individual Responses. Long term we're looking to modify the export to include the raw data for Max Diff though we do not currently have a timeline for this improvement.

As far as tools to compute this, I understand that SPSS has an extension.

Bri

Documentation Coordinator/Survey Sorceress

SurveyGizmo Customer Support

— Gregorioetchevers on 06/21/2016

What other software outside of SurveyGizmo can be used to compute a Bayesian average with the values exported in SPSS?

Admin

— Bri Hillmer on 11/25/2015

@Gregorioetchevers: I'm sorry to say that there is not currently a way to report on the Max Diff question type in this way. You might want to check out Sawtooth Software; I believe their Max Diff analyzer reports probabilities.

Sorry I don't have a better answer!

Bri

Documentation Coordinator/Survey Sorceress

SurveyGizmo Customer Support

— Gregorioetchevers on 11/25/2015

Can you tell me how can I see the ranking results in percentages from 0 to 100?

I must present the results in percentages, for a question about the probability of choosing certain attributes.

Thank you!